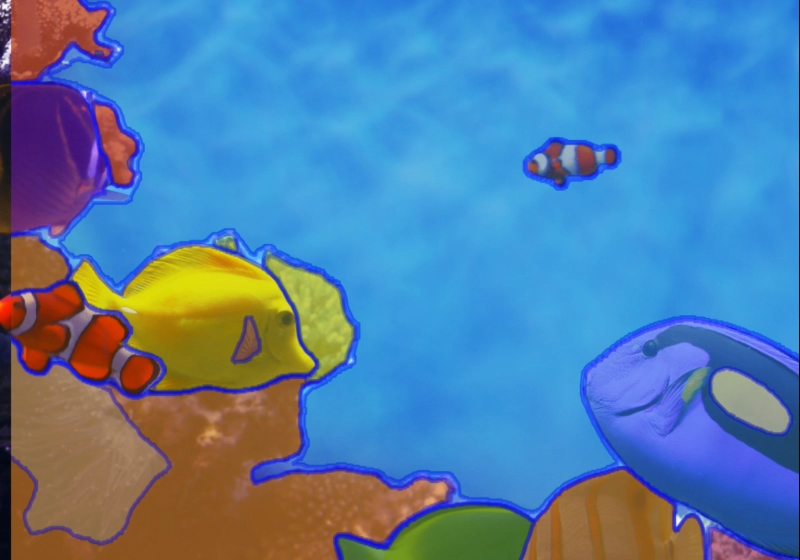

Computer vision has advanced rapidly, but most segmentation models still need fine-tuning for each new task. Meta’s Segment Anything Model (SAM) changes that. SAM is a foundation model for image segmentation: given a prompt, it can segment objects it was never explicitly trained on, with no retraining needed.

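SAM follows a promptable segmentation approach. You provide a prompt (a point, a box, or a rough mask), and the model returns a mask for the relevant object. The SAM paper also explores free-form text prompts, but only as a proof of concept; the released model takes point, box, and mask prompts. SAM was trained on the SA-1B dataset of about 11 million images and over 1 billion masks, which helps it generalize across diverse domains.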
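As a rough illustration of prompting, here is a minimal sketch that assumes the open-source `segment_anything` package is installed and a ViT-H checkpoint has been downloaded. The checkpoint path and the helper names `make_prompt` and `segment` are assumptions made for this example, not part of the library.

```python
def make_prompt(points=None, box=None):
    """Build keyword arguments for a point and/or box prompt.

    points: list of (x, y, is_foreground) tuples in pixel coordinates.
    box:    (x0, y0, x1, y1) in pixel coordinates.
    (Hypothetical helper, not part of segment_anything.)
    """
    if not points and box is None:
        raise ValueError("provide at least one point or a box")
    prompt = {}
    if points:
        prompt["point_coords"] = [[x, y] for x, y, _ in points]
        # SAM uses label 1 for foreground clicks and 0 for background clicks.
        prompt["point_labels"] = [1 if fg else 0 for _, _, fg in points]
    if box is not None:
        prompt["box"] = list(box)
    return prompt


def segment(image_rgb, prompt, checkpoint="sam_vit_h_4b8939.pth"):
    """Return the highest-scoring mask for one prompt.

    image_rgb:  HxWx3 uint8 NumPy array in RGB order.
    checkpoint: assumed local path to a downloaded ViT-H checkpoint.
    """
    # Imported here so make_prompt works without the heavy dependencies.
    import numpy as np
    from segment_anything import SamPredictor, sam_model_registry

    sam = sam_model_registry["vit_h"](checkpoint=checkpoint)
    predictor = SamPredictor(sam)
    predictor.set_image(image_rgb)  # computes the image embedding once

    arrays = {name: np.array(value) for name, value in prompt.items()}
    masks, scores, _ = predictor.predict(multimask_output=True, **arrays)
    return masks[int(np.argmax(scores))]  # boolean HxW mask
```

A single foreground click would be `make_prompt(points=[(320, 240, True)])`. Because the image embedding is computed once in `set_image`, a real application would keep the predictor around and call `predict` repeatedly with different prompts rather than rebuilding the model each time, as this sketch does for brevity.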

Use Cases

Autonomous Systems – In robotics and self-driving tech, SAM can delineate objects in changing scenes. Its masks are class-agnostic, so a separate model still has to label what each object is before the result feeds into decision-making.

Medical Imaging – Helps segment organs or anomalies in scans without requiring extensive labeled datasets.

Retail & E-commerce – Automatically isolates products from backgrounds, streamlining inventory management and AR try-ons.

Agriculture – Segments crops and areas affected by pests or disease in aerial or drone imagery, supporting yield predictions.
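For the retail use case, isolating a product comes down to applying a segmentation mask to the image. The sketch below uses plain nested lists so it runs without dependencies; `cut_out` is a hypothetical helper name, and the tiny image and mask are made-up values for illustration only.

```python
def cut_out(image, mask, background=(255, 255, 255)):
    """Keep pixels where mask is True and paint the rest with background.

    image: rows of (r, g, b) tuples.
    mask:  rows of booleans with the same height and width as image.
    """
    if len(image) != len(mask) or any(
        len(img_row) != len(mask_row) for img_row, mask_row in zip(image, mask)
    ):
        raise ValueError("image and mask must have the same height and width")
    return [
        [pixel if keep else background for pixel, keep in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# Made-up 2x2 image: the left column is the "product", the right is backdrop.
image = [[(200, 30, 30), (10, 10, 10)],
         [(190, 40, 35), (12, 11, 10)]]
mask = [[True, False],
        [True, False]]
product_only = cut_out(image, mask)
```

With NumPy arrays, the same operation is usually written as `np.where(mask[..., None], image, background)`.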
